AI for Code Review in 2026: Tools, Practices, and Limitations

How to use AI tools for effective code review without replacing human judgment. Comparison of CodeRabbit, Claude Code, and Copilot review features.

AI code review has crossed a credibility threshold in 2026. It still cannot replace a senior developer's architectural judgment, but it reliably catches 60 to 80 percent of mechanical issues — freeing human reviewers to focus on what matters: design decisions, business logic correctness, and long-term maintainability.

I have run AI code review tools alongside human review on every pull request for six months. Here is what works, what does not, and how to integrate AI review into a workflow that actually improves code quality.

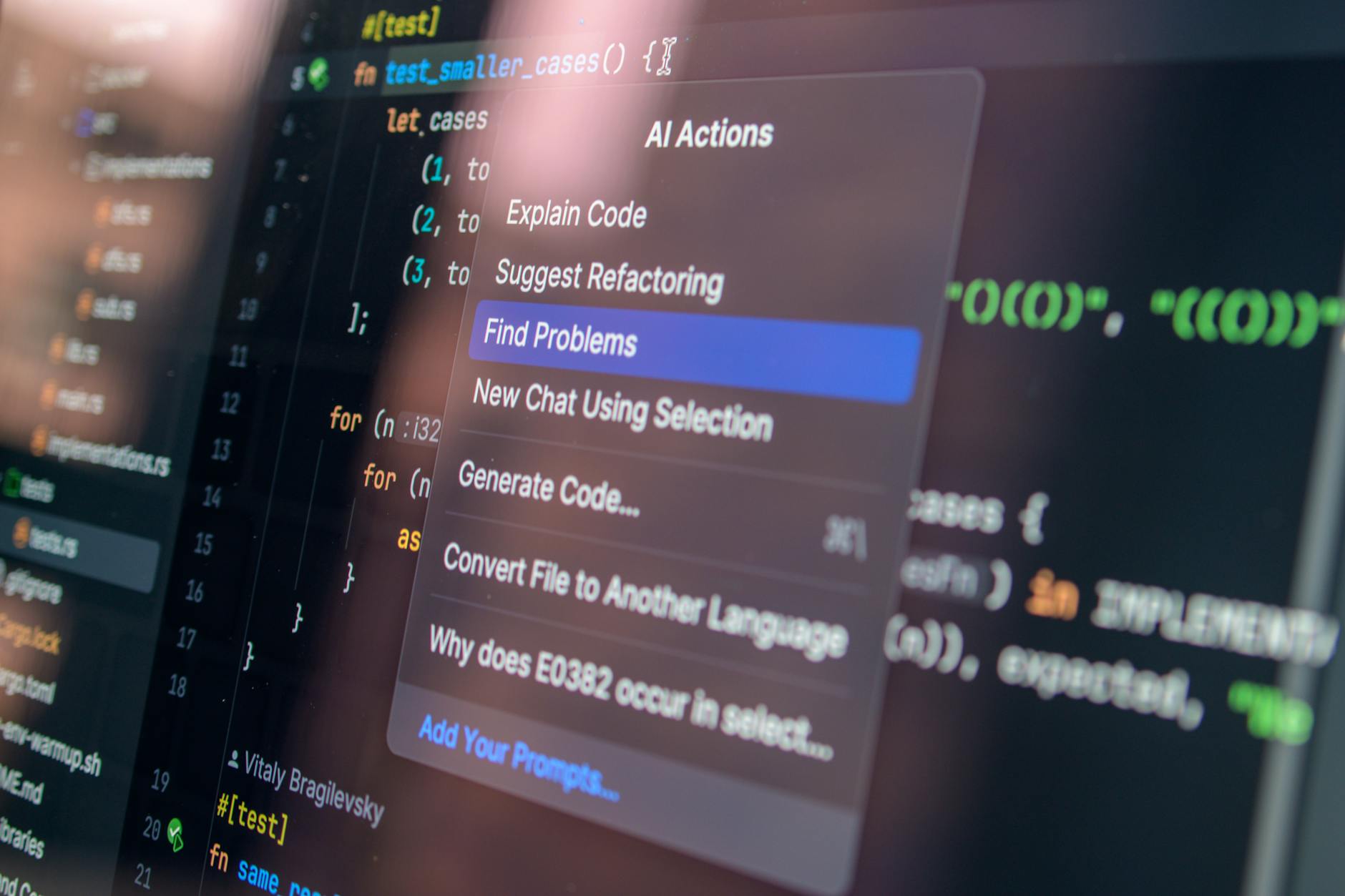

The Tools Compared

| Tool | Strengths | Weaknesses | Price |
|---|---|---|---|
| CodeRabbit | PR-native workflow, line-by-line inline comments, incremental reviews on follow-up commits | Limited to PR review only; cannot analyze the broader codebase context | Free (public repos) / $12/mo (private) |
| Claude Code /review | Deep multi-file analysis, OWASP-aware security scanning, understands project-wide conventions | Requires manual invocation per review; no PR integration | API pay-per-use |
| GitHub Copilot Review | Native GitHub integration, fast turnaround, surfaces issues as you write code | Surface-level analysis; frequently misses logic errors and architectural concerns | Included in Copilot subscription |
| CodeScene | Behavioral code analysis, team dynamics insights, technical debt hotspots over time | Not a line-level reviewer; different category of tool entirely | Free (OSS) / Paid tiers |

Among these, CodeRabbit and Claude Code complement each other well. CodeRabbit runs automatically on every PR and catches the obvious issues in seconds. Claude Code handles deeper reviews when a change is complex or security-sensitive.

What AI Review Reliably Catches

After observing hundreds of AI-reviewed pull requests, these categories consistently produce accurate findings:

- SQL injection vectors in dynamically constructed queries

- Cross-site scripting vulnerabilities in user-rendered content

- Missing null checks and undefined property access

- Inconsistent error handling patterns across similar functions

- Unused imports, dead code blocks, and unreachable branches

- Style guide violations (naming conventions, spacing, import ordering)

- Missing test coverage for newly introduced code paths

- Race conditions in async operations lacking proper locking or atomicity
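To make the first item on that list concrete, here is a minimal sketch of the pattern AI reviewers tend to flag, next to the parameterized version they usually suggest. It uses Python's built-in `sqlite3` module; the `users` table and the function names are hypothetical, chosen only for illustration.

```python
import sqlite3


def find_user_unsafe(conn, username):
    # Vulnerable: user input is spliced directly into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Parameterized: the value is bound separately from the SQL text
    # and is always treated as data, never as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


def make_demo_db():
    # Hypothetical in-memory table used only for this demonstration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "nobody' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # every row comes back
    print(find_user_safe(conn, payload))    # no rows match the literal string
```

The injected `OR '1'='1'` clause makes the unsafe query match every row, while the parameterized version looks for a user whose name is literally the payload string and finds none.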

On one project, CodeRabbit flagged a Stripe webhook handler that was not verifying the signature on incoming requests, a vulnerability that would have let anyone who found the endpoint forge payment confirmation events. This is the kind of issue that mechanical review catches and exhausted human reviewers sometimes skip.
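For reference, a signature check of the kind that handler was missing can be sketched with the standard library alone. The sketch assumes Stripe's documented scheme: a `Stripe-Signature` header carrying `t=<timestamp>` and one or more `v1=<hex digest>` values, where the digest is HMAC-SHA256 over `<timestamp>.<raw body>` keyed by the endpoint secret. The function name, the `now` parameter, and the 300-second tolerance are illustrative choices, not the project's actual code. In production, the official `stripe` library's `stripe.Webhook.construct_event` helper is the safer route.

```python
import hashlib
import hmac
import time
from typing import Optional


def verify_webhook_signature(
    payload: bytes,
    sig_header: str,
    secret: str,
    tolerance: int = 300,          # assumed replay window, in seconds
    now: Optional[float] = None,   # injectable clock, for testing only
) -> bool:
    # Parse "t=<timestamp>,v1=<hex signature>[,...]" into its parts.
    timestamp = None
    candidates = []
    for item in sig_header.split(","):
        key, _, value = item.strip().partition("=")
        if key == "t":
            timestamp = value
        elif key == "v1":
            candidates.append(value)
    if timestamp is None or not timestamp.isdigit() or not candidates:
        return False

    # Reject events whose timestamp is outside the tolerance window,
    # which limits replay of an old, validly signed request.
    current = time.time() if now is None else now
    if abs(current - int(timestamp)) > tolerance:
        return False

    # Recompute the digest over "<timestamp>.<raw body>" and compare in
    # constant time against every v1 value in the header.
    signed_payload = timestamp.encode() + b"." + payload
    expected = hmac.new(secret.encode(), signed_payload, hashlib.sha256).hexdigest()
    return any(
        hmac.compare_digest(expected.encode(), candidate.encode())
        for candidate in candidates
    )
```

The check must run against the raw request body, before any JSON parsing, because re-serializing the payload can change its bytes and invalidate the digest.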

What AI Review Still Misses

These categories remain firmly in human territory:

- Business logic correctness — does the code implement the right behavior for edge cases?

- Architectural fit — does this change align with the system's design principles or does it introduce a conflicting pattern?

- User experience implications — will this backend change degrade frontend performance or create confusing UI states?

- Subtle performance bottlenecks — N+1 queries hiding in ORMs, memory leaks in long-running processes, inefficient data structures

- Whether the code solves the right problem at all — the most important question, and one no AI can answer

Do not let AI review create false confidence. A clean AI review report does not mean the code is correct. It means the code is free of the specific, mechanical issues AI tools know how to detect. Architectural and business-logic review still requires a human who understands the system.
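The N+1 query problem mentioned above is a good illustration of why these issues slip past line-level review: each line looks fine in isolation. The sketch below reproduces the pattern with plain `sqlite3` rather than an ORM, so the extra queries are visible. The schema, the `CountingConnection` wrapper, and the function names are all hypothetical. With an ORM, the inner query is typically hidden behind an innocent-looking attribute access inside a loop.

```python
import sqlite3


class CountingConnection:
    """Thin wrapper that counts how many queries are issued."""

    def __init__(self, conn):
        self.conn = conn
        self.queries = 0

    def query(self, sql, params=()):
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def titles_by_author_n_plus_one(db):
    # One query for the authors, then one more query per author.
    result = {}
    for author_id, name in db.query("SELECT id, name FROM authors ORDER BY id"):
        rows = db.query(
            "SELECT title FROM books WHERE author_id = ? ORDER BY id", (author_id,)
        )
        result[name] = [title for (title,) in rows]
    return result


def titles_by_author_joined(db):
    # A single query; the grouping happens in Python instead.
    result = {}
    rows = db.query(
        "SELECT a.name, b.title FROM authors a "
        "LEFT JOIN books b ON b.author_id = a.id ORDER BY a.id, b.id"
    )
    for name, title in rows:
        result.setdefault(name, [])
        if title is not None:  # authors without books yield a NULL title
            result[name].append(title)
    return result


def make_library_db():
    # Hypothetical demo data: three authors, one of them without books.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE books (id INTEGER PRIMARY KEY, title TEXT, author_id INTEGER)"
    )
    conn.executemany(
        "INSERT INTO authors (id, name) VALUES (?, ?)",
        [(1, "Ada"), (2, "Grace"), (3, "Linus")],
    )
    conn.executemany(
        "INSERT INTO books (title, author_id) VALUES (?, ?)",
        [("Notes", 1), ("Compilers", 2), ("COBOL", 2)],
    )
    return conn
```

Both functions return the same dictionary, but the first issues one query per author on top of the initial one, so its cost grows with the size of the table. Spotting that requires knowing how large the table gets in production, which is context the diff alone does not carry.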

A Workflow That Works

After iterating through several approaches, this five-step workflow produces the best results:

- Developer writes code and runs local checks (linting, type checking, unit tests).

- AI first-pass review runs automatically: CodeRabbit on the PR, or Claude Code /review invoked manually for complex changes. This catches mechanical issues in under a minute.

- Human reviewer reads the AI comments but focuses their own attention on architecture, design patterns, and business logic, the areas where human judgment is irreplaceable.

- AI generates test suggestions for any uncovered code paths identified during review.

- Once both reviews are resolved, the PR merges with considerably higher confidence than human review alone could provide.
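The routing and gating logic in that workflow can be sketched as two small functions: one deciding whether a change warrants the manual deep review, the other listing what still blocks the merge. Everything here is an assumption made for illustration, including the `PullRequest` fields, the path patterns, and the 400-line threshold. None of it corresponds to an API of CodeRabbit, Claude Code, or GitHub.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical heuristics for "complex or security-sensitive" changes.
SENSITIVE_PATTERNS = ("*auth*", "*payment*", "*webhook*", "*.sql")
LARGE_CHANGE_LINES = 400


@dataclass
class PullRequest:
    changed_files: list[str]
    lines_changed: int
    local_checks_passed: bool = False   # step 1: lint, types, unit tests
    open_ai_findings: int = 0           # step 2: unresolved AI comments
    human_approved: bool = False        # step 3: human sign-off


def needs_deep_review(pr: PullRequest) -> bool:
    # Route to the manual, deeper AI review when the change touches a
    # sensitive path or is large enough to be hard to read in one pass.
    touches_sensitive = any(
        fnmatch(path.lower(), pattern)
        for path in pr.changed_files
        for pattern in SENSITIVE_PATTERNS
    )
    return touches_sensitive or pr.lines_changed > LARGE_CHANGE_LINES


def merge_blockers(pr: PullRequest) -> list[str]:
    # A clean AI report alone is never sufficient: human approval is
    # checked independently of the AI findings.
    blockers = []
    if not pr.local_checks_passed:
        blockers.append("local checks failing")
    if pr.open_ai_findings > 0:
        blockers.append(f"{pr.open_ai_findings} unresolved AI findings")
    if not pr.human_approved:
        blockers.append("missing human approval")
    return blockers
```

The design point is that the three conditions are independent. An empty list of AI findings does not substitute for the human approval, and vice versa.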

The Bottom Line

The right model for AI code review in 2026 is not replacement; it is augmentation. AI serves as the first-pass filter — fast, tireless, and thorough on the mechanical categories. The human reviewer serves as the final decision-maker — bringing context, judgment, and accountability that no AI tool currently possesses.

Neither should work alone. Together, they produce reviews that are faster, more thorough, and more consistent than either could achieve independently.
