AI-Assisted Testing in 2026: Generate, Run, and Fix Tests Automatically

How to use AI to generate test suites from descriptions, maintain tests as code changes, and achieve 80%+ coverage with minimal manual effort.

Testing is arguably the most AI-amenable activity in software development. Tests are structured, repetitive, and follow predictable patterns, which is exactly the kind of work where AI tools excel. In 2026, AI-assisted testing has progressed from "generates plausible test scaffolding" to "generates, runs, debugs, and maintains test suites with minimal human intervention."

I have used AI-generated tests across three production projects over the past year. The results are not perfect, but the productivity shift is undeniable: tests that would take hours to write manually appear in minutes, and test maintenance — traditionally the killer of automated testing initiatives — has become dramatically lighter.

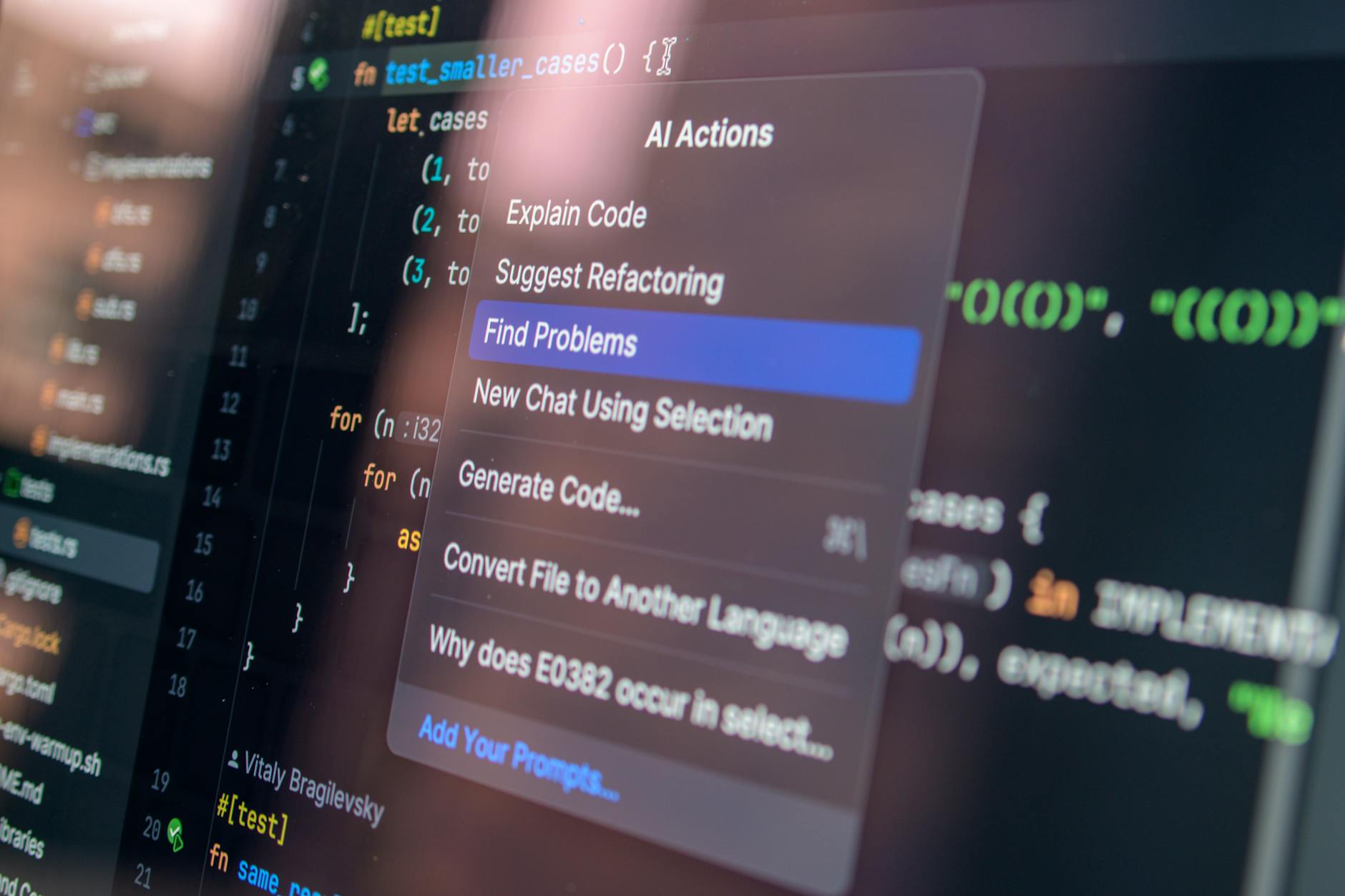

The AI Testing Stack

| Layer | Tool | AI Integration | Effectiveness |
|---|---|---|---|
| Unit Tests | Cursor / Claude Code | Generate from function signatures and behavior descriptions | High: AI excels at structured, isolated tests |
| Integration Tests | Claude Code | Generate API test suites from route definitions and schemas | Medium-High: requires schema accuracy |
| E2E Tests | Playwright + Claude Code | Generate browser tests from user flow descriptions | Medium: selectors can be flaky, needs review |
| Visual Regression | Percy + AI | AI-powered diff detection with intelligent auto-approval | High: reduces false positives significantly |

E2E Test Generation in Practice

The workflow that has proven most effective is deceptively simple. Describe the user flow in plain English, and Claude Code with Playwright generates a complete, runnable test file.

Input example:

"User logs in with valid credentials, navigates to the dashboard, creates a new project, invites a team member by email, and verifies the invitation appears in the pending invitations list."

The generated test includes proper selectors (using data-testid attributes where they exist), waits for asynchronous operations, assertions at each step, and error handling for common failure modes. A flow that would take 45 minutes to write manually arrives in roughly two minutes.
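To make the shape of such a test concrete, here is a minimal sketch of the flow above written against Playwright's synchronous Python API. It is not actual tool output: the function name, URLs, field labels, button names, and `data-testid` values are all hypothetical, and a real application would need its own.

```python
def invite_team_member_flow(page, base_url, user_email, password, invitee_email):
    """Drive the login -> project -> invite flow on a Playwright sync-API Page.

    Every URL, label, button name, and test ID here is an assumption made
    for illustration; substitute the ones your application actually uses.
    """
    # Step 1: log in with valid credentials.
    page.goto(f"{base_url}/login")
    page.get_by_label("Email").fill(user_email)
    page.get_by_label("Password").fill(password)
    page.get_by_role("button", name="Log in").click()

    # Step 2: wait for the dashboard URL instead of sleeping.
    page.wait_for_url(f"{base_url}/dashboard")

    # Step 3: create a new project.
    page.get_by_test_id("new-project-button").click()
    page.get_by_label("Project name").fill("Demo project")
    page.get_by_role("button", name="Create").click()

    # Step 4: invite a team member by email.
    page.get_by_test_id("invite-member-button").click()
    page.get_by_label("Invitee email").fill(invitee_email)
    page.get_by_role("button", name="Send invite").click()

    # Step 5: the invitation should become visible in the pending list.
    # wait_for raises a timeout error if it never appears, failing the test.
    pending = page.get_by_test_id("pending-invitations")
    pending.get_by_text(invitee_email).wait_for(state="visible")
```

Under pytest-playwright, a test function that receives the `page` fixture could call this helper directly. Generated tests more commonly assert with Playwright's `expect` API; the sketch uses `wait_for` only to stay free of imports.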

The tests are not as polished as hand-crafted ones — AI tends to produce overly specific selectors and can miss edge cases around loading states and network failures. But they achieve roughly 70-80% of the quality in about 10% of the time. For most indie and small-team projects, that trade-off transforms the economics of testing from "we cannot afford comprehensive tests" to "we cannot afford not to have them."

Where AI Testing Excels

Test generation from existing code. Point Claude Code at an untested module and ask it to write comprehensive tests. It reads the function signatures, traces the logic branches, identifies edge cases (null inputs, empty arrays, boundary values), and generates tests that, in my experience, typically reach 85%+ branch coverage on the first pass.
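As an illustration of those edge-case categories, the sketch below pairs a small function with the kind of tests one would hope to see generated for it. The `chunk` function and the test names are hypothetical, chosen only to show null, empty, and boundary inputs side by side.

```python
import unittest


def chunk(items, size):
    """Split a list into consecutive chunks of at most `size` items.

    Hypothetical function under test, not taken from any real project.
    """
    if items is None:
        raise TypeError("items must be a list, not None")
    if size < 1:
        raise ValueError("size must be at least 1")
    return [items[i:i + size] for i in range(0, len(items), size)]


class ChunkEdgeCaseTests(unittest.TestCase):
    def test_none_input_is_rejected(self):
        # Null input: fail loudly instead of returning something misleading.
        with self.assertRaises(TypeError):
            chunk(None, 2)

    def test_empty_list_yields_no_chunks(self):
        # Empty array: the result is an empty list, not [[]].
        self.assertEqual(chunk([], 3), [])

    def test_size_equal_to_length_gives_one_chunk(self):
        # Boundary: chunk size exactly matches the input length.
        self.assertEqual(chunk([1, 2, 3], 3), [[1, 2, 3]])

    def test_size_one_below_length_leaves_remainder(self):
        # Boundary: one item spills into a final, shorter chunk.
        self.assertEqual(chunk([1, 2, 3], 2), [[1, 2], [3]])

    def test_zero_size_is_rejected(self):
        # Boundary: a size below the minimum is an error.
        with self.assertRaises(ValueError):
            chunk([1], 0)
```

Saved to a file, this runs with `python -m unittest <file>`. When reviewing generated tests, checking that each of these categories is represented is a quick way to spot gaps.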

Test maintenance during refactoring. This is the breakthrough use case. When you refactor a module, AI tools can update every affected test file simultaneously — renaming functions, updating import paths, adjusting expected values for changed return types. What used to be hours of tedious test updates becomes a single prompt.

Regression test generation for bug fixes. When you fix a bug, ask AI to write a regression test that specifically reproduces the original failure. This ensures the bug stays fixed — and it captures the exact reproduction steps while the context is fresh.
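A regression test of this kind can be very small. The sketch below assumes a hypothetical bug in which a price parser choked on thousands separators; the function, the bug, and the input string are invented for illustration.

```python
import unittest


def parse_price(text):
    """Parse a price string such as "$1,299.50" into integer cents.

    Hypothetical function. Assumed original bug: thousands separators
    were not stripped, so "$1,299.50" raised ValueError.
    """
    cleaned = text.strip().lstrip("$").replace(",", "")  # the fix: drop separators
    dollars, _, cents = cleaned.partition(".")
    # Pad or truncate the fractional part to exactly two digits.
    return int(dollars) * 100 + int(cents.ljust(2, "0")[:2])


class ParsePriceRegressionTests(unittest.TestCase):
    def test_thousands_separator_regression(self):
        # Uses the exact input from the (hypothetical) bug report, so the
        # test fails again if the separator handling is ever removed.
        self.assertEqual(parse_price("$1,299.50"), 129950)

    def test_plain_price_still_works(self):
        # Guards against the fix breaking the previously working path.
        self.assertEqual(parse_price("$12"), 1200)
```

Keeping the original failing input verbatim in the test, along with a comment pointing at the bug report, preserves the reproduction steps the paragraph above describes.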

Where AI Testing Falls Short

Three limitations to watch for: AI-generated tests can miss subtle edge cases, produce flaky selectors in E2E tests, and test implementation details rather than observable behavior. Human review remains essential for critical paths — especially payment flows, authentication, and data integrity operations.
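The third limitation is easiest to see with a concrete contrast. In the sketch below, two hypothetical cart classes expose the same public behavior but store their data differently, as they might before and after a refactor. One check uses only the public API; the other reaches into a private attribute.

```python
class DictCart:
    """Original implementation: items stored in a dict keyed by name."""

    def __init__(self):
        self._items = {}  # name -> (unit_price_cents, quantity)

    def add(self, name, unit_price_cents, quantity=1):
        price, qty = self._items.get(name, (unit_price_cents, 0))
        self._items[name] = (price, qty + quantity)

    def total_cents(self):
        return sum(price * qty for price, qty in self._items.values())


class ListCart:
    """Refactored implementation: same public behavior, list of line items."""

    def __init__(self):
        self._lines = []  # (name, unit_price_cents, quantity)

    def add(self, name, unit_price_cents, quantity=1):
        self._lines.append((name, unit_price_cents, quantity))

    def total_cents(self):
        return sum(price * qty for _, price, qty in self._lines)


def check_behavior(cart):
    """Behavior-focused check: touches only the public API."""
    cart.add("pen", 150, quantity=2)
    cart.add("pad", 400)
    return cart.total_cents() == 700


def check_internals(cart):
    """Implementation-coupled check: inspects a private attribute."""
    cart.add("pen", 150, quantity=2)
    return getattr(cart, "_items", None) == {"pen": (150, 2)}
```

`check_behavior` holds for both classes, while `check_internals` holds only for `DictCart` and fails on `ListCart` even though nothing a user can observe has changed. Generated tests that resemble the second check are worth rewriting during review.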

AI testing tools are strongest when used for test scaffolding, coverage expansion, and maintenance automation. They are weakest when asked to design a test strategy or determine what should be tested. That judgment call — what constitutes a critical path, what edge cases matter, what level of coverage is sufficient for a given risk profile — remains a human responsibility.

Use AI to write and maintain the tests. Use your own judgment to decide what needs testing in the first place.

Getting Started

Start with one test layer. I recommend E2E tests with Playwright and Claude Code — they provide the highest confidence per test and the most dramatic time savings. Describe your top three user flows, generate tests, run them, fix any flaky selectors, and commit. That single workflow gives you a safety net that most small projects lack entirely.
